The Art of Ignoring Most of the World

— William Blake

Reality seems to enjoy excess. Pixels, tokens, frequencies, variables. Every dataset arrives swollen with dimensions, as if the universe believes that the more coordinates it provides, the more impressive it will look. Machine learning is less sentimental. It spends a great deal of its time trying to quietly remove most of them.

This activity is known, somewhat politely, as dimensionality reduction.

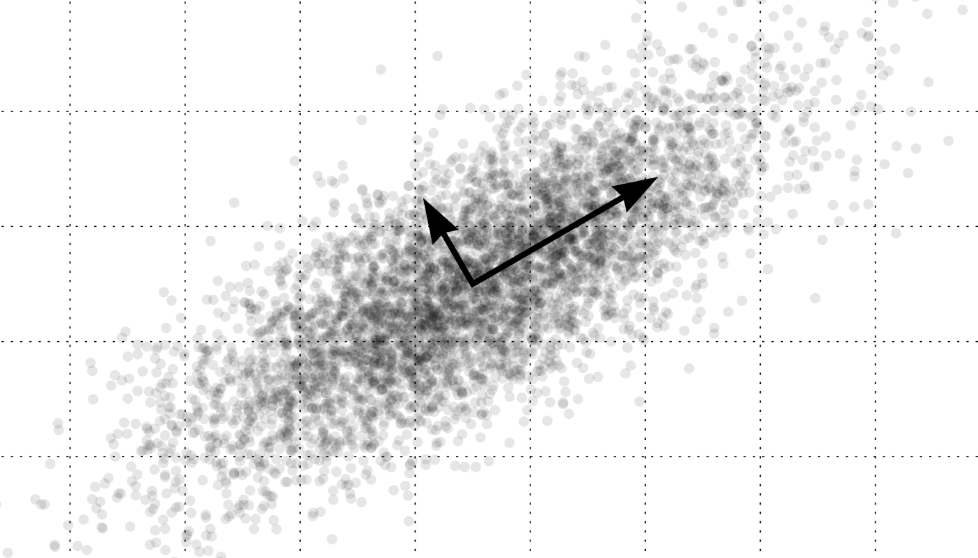

The idea is simple. Our observations often live in enormously high-dimensional spaces, but the meaningful structure inside them usually has far fewer dimensions. An image may contain millions of pixels, yet not every possible arrangement of those pixels corresponds to something that could plausibly occur in the world. Language offers another example. The space of all possible sentences is astronomically large, but human language occupies only a tiny, structured corner of it. Real data tends to gather along thin surfaces hidden inside vast mathematical spaces.

Dimensionality reduction is the attempt to discover those surfaces and ignore the rest.

Algorithms do this with varying degrees of elegance. PCA rotates the coordinate system so that most of the variance lines up along a few leading directions, and the remaining directions can be dropped. Autoencoders force observations through narrow internal bottlenecks and hope that whatever survives must have been important. Modern embedding models translate images, sounds, and sentences into vectors where distance quietly becomes meaning. The official explanation is that we compress data while preserving structure. In practice, we are making the bold assumption that the world contains far less independent variation than it initially pretends to.
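For readers who like to see the assumption tested, here is a minimal sketch of the PCA case in Python with NumPy. Everything in it is invented for illustration: the data is synthetic, built from 3 hidden factors spread across 50 observed coordinates plus a little noise, and the function name `pca_explained_variance` is hypothetical. It is one simple way to compute PCA (via the singular value decomposition), not the only one.

```python
import numpy as np

def pca_explained_variance(X):
    """Fraction of total variance captured by each principal direction,
    ordered from largest to smallest."""
    # PCA works on centered data: subtract the mean of each coordinate.
    Xc = X - X.mean(axis=0)
    # The squared singular values of the centered data are proportional
    # to the variance along each principal direction.
    s = np.linalg.svd(Xc, compute_uv=False)
    var = s ** 2
    return var / var.sum()

# Synthetic data (an assumption for this sketch): 3 hidden factors,
# linearly mixed into 50 observed coordinates, plus small noise.
rng = np.random.default_rng(0)
n_samples, n_latent, n_observed = 500, 3, 50
latent = rng.normal(size=(n_samples, n_latent))
mixing = rng.normal(size=(n_latent, n_observed))
X = latent @ mixing + 0.01 * rng.normal(size=(n_samples, n_observed))

ratios = pca_explained_variance(X)
top3 = ratios[:3].sum()
print(f"share of variance in the first 3 of {n_observed} directions: {top3:.4f}")
```

On data built this way, nearly all of the variance should land in the first three directions, which is the "thin surface" made visible. Real datasets are rarely this tidy, and their structure is often curved rather than flat, which is part of why autoencoders and learned embeddings exist.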

Surprisingly often, this assumption turns out to be correct. The world seems to enjoy hiding its complexity behind relatively small numbers of governing patterns. Planetary motion reduces to a few parameters. Physical laws compress oceans of observation into short equations. Even perception behaves this way. The visual system receives millions of signals from the retina and calmly summarizes them as objects, faces, and movement. It performs dimensionality reduction with a confidence that modern machine learning systems can only admire.

Human thinking does the same thing constantly. Faced with overwhelming detail, the mind selects a few dimensions that appear meaningful and ignores everything else. Psychologists call these structures schemas or categories. In daily life we simply call them understanding. They are extremely useful. They are also occasionally wrong. Dimensionality reduction can produce both insight and stereotype. The technique itself remains innocent.

Cultures perform their own versions of this compression. Western intellectual traditions have often favored classification. Aristotle divided the world into categories and species. Later scientific traditions continued this habit by searching for clear definitions and discrete types. Complexity becomes manageable when the right categories are found.

Chinese philosophical traditions approached the problem differently. Rather than dividing the world into fixed classes, many classical frameworks describe reality through dynamic relations. Yin and yang compress the enormous diversity of natural phenomena into two interacting tendencies. Darkness and light, stillness and motion, contraction and expansion. The world becomes intelligible through balance rather than taxonomy. It is another way of reducing dimensions.

Seen from this perspective, dimensionality reduction begins to look less like a technical trick and more like a fundamental strategy of intelligence. Science does it when it writes equations. Perception does it when it recognizes a face. Culture does it when it organizes the world through symbols and principles. Machine learning merely implements the same habit in code.

Personally, I find something strangely beautiful about this. The world appears impossibly complicated at first glance, filled with more variation than any mind or machine could hope to grasp. And yet again and again we discover that beneath the noise lie only a few dimensions that truly matter.

Understanding, in the end, may simply be the moment when we realize how much of the world can be safely ignored.